[Press Release] “Korean AI Framework Act must be improved to reflect human rights-based approach to AI”

1. On 12 May 2026, Korean Transnational Corporations Watch (KTNC Watch), Digital Justice Network, and the Institute for Digital Rights submitted the “Civil Society Opinion on a Human Rights-Based Approach to AI and the Korean AI Framework Act” to the UN High Commissioner for Human Rights during his visit to the Republic of Korea.

2. Volker Türk, the UN High Commissioner for Human Rights, made an official visit to Korea from May 12 to 14, during which he met with Korean human rights groups and government officials. This marks the first official visit by a UN High Commissioner for Human Rights to Korea in eleven years, since former High Commissioner Zeid Ra’ad Al Hussein’s visit in 2015.

3. The Office of the High Commissioner and other UN human rights bodies have called on governments and AI companies to adopt a “human rights-based approach to AI.” From this perspective, the Framework Act on the Development of AI and the Creation of a Foundation for Trust (hereinafter the “AI Framework Act”) has significant shortcomings and requires improvement.

4. In the Opinion submitted to the High Commissioner, the civil society organizations stress that the AI Framework Act should be revised to (1) stipulate AI operators’ obligations to protect affected persons, (2) guarantee the participation of affected persons in AI governance and decision-making, (3) prohibit the development and use of AI systems that pose unmitigable risks to human rights, (4) mandate fundamental rights impact assessments for high-impact private-sector AI operators and require consultation with the National Human Rights Commission of Korea, and (5) establish effective national complaint and remedy mechanisms for AI-related human rights violations.

5. The Korean government, including the Ministry of Science and ICT, and the National Assembly, which are currently reviewing amendments to the AI Framework Act, must work to improve the law by incorporating the human rights-based approach to AI that the United Nations has long advocated.

▣ Attachment: Civil Society Opinion on a Human Rights-Based Approach to AI and the Korean AI Framework Act

Civil Society Opinion on a Human Rights-Based Approach to AI and the Korean AI Framework Act

May 12, 2026

Digital Justice Network, Institute for Digital Rights, and Korean Transnational Corporations Watch

1. Korean civil society organizations support a human rights-based approach to artificial intelligence (AI). A human rights-based approach to AI is “an approach that views people as individual holders of rights, empowers them and promotes a legal and institutional environment to enforce their rights and to seek redress for any human rights violations and abuses” (A/HRC/43/29, para. 3). The UN Special Rapporteur on the promotion and protection of the right to freedom of opinion and expression has pointed to the difficulties surrounding AI transparency, accountability, and access to effective remedies. The Special Rapporteur has also emphasized that companies have a responsibility to respect human rights in line with the UN Guiding Principles on Business and Human Rights, including by conducting human rights due diligence (A/73/348, paras. 8 and 21). The Office of the UN High Commissioner for Human Rights (hereinafter the “OHCHR”) has particularly stressed the importance of applying the Guiding Principles on Business and Human Rights in the context of remedies for human rights violations (A/HRC/59/32).

2. In the Korean context, civil society likewise called for a human rights-based approach during the legislative process of the Framework Act on the Development of AI and the Creation of a Foundation for Trust (hereinafter the “AI Framework Act”), which came into force on January 22, 2026. In particular, civil society emphasized the importance of protecting and providing remedies for “affected persons,” meaning people whose human rights are potentially or actually harmed by AI. The bill passed by the National Assembly included the following provisions, which may be seen as partially reflecting civil society’s concerns and demands: ▲ the definition of “impacted person” (§2(9)), ▲ impact assessments for high-impact AI (§35), and ▲ reporting and complaint procedures to competent authorities (§40).

3. Nevertheless, civil society has criticized the AI Framework Act for still containing significant shortcomings from the perspective of a human rights-based approach to AI. The Ministry of Science and ICT (hereinafter the “MSIT”), which is currently the competent authority for this law, is operating a “Research Group on Institutional Improvement of the AI Framework Act” and reviewing directions for amending the Act. We believe that any amendment to the AI Framework Act should ensure full compliance with the human rights-based approach to AI required by international human rights norms.

4. The principle that companies have a responsibility to respect human rights is not only explicitly stated in the UN Guiding Principles on Business and Human Rights, but is also reflected in the Korean government’s National Action Plan on Human Rights (NAP). However, we have serious concerns as to whether the AI Framework Act properly reflects this principle. Accordingly, we call for the following improvements so that the AI Framework Act can ensure corporate accountability, consistent with international human rights standards, thereby providing effective protection and remedies for people affected by AI-related risks.

(1) Stipulate AI operators’ obligations to protect affected persons

- An “impacted person” is defined as “a person whose life, physical safety, and fundamental rights are significantly affected by artificial intelligence products or artificial intelligence services” (§2(9)). Such persons “shall be entitled to be provided with a clear and meaningful explanation of the main criteria, principles, etc. utilized in deriving the final output of artificial intelligence, to the extent technically and reasonably possible” (§3②). However, these provisions are directed only at the State. They are framed as broad policy responsibilities rather than as binding obligations. Because the Act imposes no corresponding legal duties on AI operators, it leaves a significant accountability gap. The “Responsibilities of business operators regarding high-impact AI” (§34①(3)) do provide for protective measures, but the beneficiaries of those measures are limited to “users” (§34). Put simply, big tech companies conducting high-impact AI business bear no legal responsibility even if they fail to establish protective measures for affected persons.

- Under the Act, the subjects whom high-impact AI operators are obligated to protect do not include people negatively affected by AI. For example, while the Act defines hospitals, hiring companies, and financial institutions as “users” and imposes obligations on high-impact AI operators to protect them, it does not include patients, job applicants, or loan applicants within the scope of protection. Regarding this issue, on December 21, 2025, the National Human Rights Commission of Korea recommended legislative improvements, noting that “there remains a legal gap in protection measures for job applicants, patients, loan applicants, and others whose fundamental rights are significantly affected.” On January 5, 2026, the Board of Audit and Inspection also pointed out that “potential victims arising from the use of high-impact AI are excluded from the scope of protection,” and further noted that “in areas such as hiring, where the Ministry of Employment and Labor has not introduced guidelines, gaps in protection for persons affected by high-impact AI are anticipated, and differences in stakeholder protection are expected depending on how each competent ministry manages high-impact AI.”

- (Call for Improvement)

- Amend the Act to explicitly require operators of high-impact AI systems to establish and implement measures to protect affected persons and provide them with meaningful explanations.

- Apply the obligations imposed on high-impact AI operators not only to developers and providers, but also to businesses deploying and using high-impact AI systems for operational purposes, including hospitals, hiring companies, and financial institutions.

<Figure 1> Obligations of High-Impact AI Operators (Example: Employment Sector)

* source: MSIT (2026). Guidelines for Determining High-Impact AI Systems, pp. 16-17.

(2) Guarantee the participation of affected persons in AI governance and decision-making

- The Act provides for national AI policy governance bodies. These include the Presidential Council on National Artificial Intelligence Strategy (§7; hereinafter the “National AI Strategy Council”) and its sectoral committees, special committees, and advisory groups (§10). However, these bodies are not required to include affected persons or civil society organizations representing their interests. As a result, their composition is generally skewed toward industry interests. The National Human Rights Commission of Korea has recommended that the sectoral and special committees under the National AI Strategy Council include human rights experts in a balanced manner alongside industry and technology experts.

- These provisions are also inconsistent with international human rights standards articulated by the United Nations. The UN Secretary-General has stated that “The development, diffusion and adoption of new technologies consistent with international obligations can be enhanced by effective and meaningful participation of rights holders. Towards that end, States should create opportunities for rights holders, particularly those most affected or likely to suffer adverse consequences, to effectively participate and contribute to the development process, and facilitate targeted adoption of new technologies. Through participation and inclusive consultation, States can determine what technologies would be most appropriate and effective as they pursue balanced and integrated sustainable development with economic efficiency, environmental sustainability, inclusion and equity” (A/73/348, para.47).

- Individuals and workers affected by AI should not be treated merely as passive recipients of technological deployment, but rather as rights-holders. Ensuring that affected persons can participate in and express their views on national or workplace AI policy decisions that affect them is essential to democratic governance in the age of AI.

- (Call for Improvement)

- Include affected persons or civil society organizations representing their interests in the National AI Strategy Council, sectoral committees, special committees, and advisory groups.

- Require employer companies to inform workers and workers’ representatives before deploying or using high-impact AI in the workplace.

(3) Prohibit the development and use of AI systems that pose unmitigable risks to human rights

- In Korea, technologies such as real-time remote biometric identification in public spaces, predictive policing, emotion recognition in workplaces and schools, and autonomous weapons systems are being rapidly developed and deployed. However, the AI Framework Act does not prohibit any category of AI development or use. AI systems whose risks to human rights cannot be adequately mitigated should therefore be explicitly prohibited.

- The OHCHR has recommended that States “Expressly ban AI applications that cannot be operated in compliance with international human rights law and impose moratoriums on the sale and use of AI systems that carry a high risk for the enjoyment of human rights, unless and until adequate safeguards to protect human rights are in place” (A/HRC/48/31, para.59(c)).

- (Call for Improvement)

- Prohibit the development and use of AI whose risks to human rights cannot be mitigated. This should include real-time remote biometric identification in public spaces, predictive policing, emotion recognition in workplaces and schools, and fully autonomous lethal weapons.

(4) Mandate fundamental rights impact assessments for high-impact private-sector AI operators and require consultation with the National Human Rights Commission of Korea

- “AI human rights impact assessment” is a core human rights due diligence tool for preventing and mitigating the potential or actual adverse impacts of AI on human rights. International human rights bodies, including the UN Special Rapporteur on freedom of opinion and expression and the OHCHR, have emphasized the importance of AI human rights impact assessments. Several jurisdictions, including the EU and the US State of Colorado, have also established systems to assess and address the impacts of AI on fundamental rights.

- The Act provides for “impact assessments of high-impact AI” on fundamental rights (§35). This has led to a mistaken impression internationally that Korea’s AI Framework Act mandates human rights due diligence, but this is clearly not the case. First, under the Act, private-sector operators conducting high-impact AI business are subject only to an obligation to “endeavor” to conduct such assessments. Because this is merely a duty to make efforts, it is not meaningfully enforceable and carries no effective sanctions. Second, public institutions are required only to “give priority consideration” to such assessments, and the assessments will carry real weight only once they are reflected in procurement-related regulations. The Act contains no provisions prohibiting any AI systems, and its scope of high-impact AI is extremely narrow. For these reasons, the Act should be amended to mandate impact assessments for both public institutions and private-sector operators deploying high-impact AI systems.

- In addition, the Act entirely fails to account for the AI value chain, even though AI-related risks arise throughout it. For this reason, the OECD Due Diligence Guidance for AI calls on AI developers and also on a wide range of other actors involved in the deployment, operation, and use of AI to conduct responsible business conduct due diligence. The AI Framework Act, however, treats businesses such as hospitals, hiring companies, and financial institutions as mere “users.” These businesses are the ones that actually use high-impact AI in ways that significantly affect individuals’ rights, so treating them as users creates a responsibility gap in the downstream segment of the value chain.

- Above all, for impact assessments to be effective, individuals and groups whose human rights are affected should be identified, and their participation and consultation should be ensured, particularly to gather their views on measures intended to prevent or mitigate adverse human rights impacts. In addition, to ensure transparency toward affected persons, the results of impact assessments for high-impact AI should be disclosed to the public to a certain extent. However, the current impact assessment framework does not adequately provide for consultation with affected individuals and groups or public disclosure of assessment results. As a result, impact assessments risk becoming merely formalistic compliance exercises rather than effective human rights safeguards.

- Furthermore, as recommended by the National Human Rights Commission of Korea, it should be verified whether high-impact AI is deployed and used in accordance with its intended purpose. Impact assessments should also be conducted not only before the provision of a “new” AI product or service, but also before any “significant functional modification.” Most importantly, there is a need to establish a legal basis allowing State authorities and other public bodies to request documents and materials from AI operators in order to verify the results of impact assessments.

- The impact assessment system is intended to “assess impacts on fundamental rights.” The National Human Rights Commission of Korea therefore recommended a legal basis for the Commission and human rights experts to take part in formulating and revising standards and guidelines for high-impact AI impact assessments. The MSIT did not accept these recommendations from the Commission, which is Korea’s national human rights institution. Nor has the MSIT consulted the Commission on the content and methodology of impact assessments or on the operation of the system, including the qualifications of specialized institutions. There are serious concerns as to whether an impact assessment system that excludes the national human rights institution can operate effectively and serve its intended purpose.

- (Call for Improvement)

- Mandate impact assessments for both public institutions and private companies operating high-impact AI systems, and reflect such requirements in procurement-related laws and regulations.

- Guarantee the participation of and consultation with affected individuals and groups throughout the impact assessment process.

- Disclose the results of impact assessments for high-impact AI systems to a certain extent.

- Require impact assessments to verify whether AI is deployed and used in accordance with its intended purpose.

- Require impact assessments to be conducted prior to any “significant functional modification.”

- Establish a legal basis allowing State authorities and other public bodies to request documents and materials from AI operators in order to verify the results of impact assessments.

- Consult with the National Human Rights Commission of Korea regarding the content and methodology of impact assessments and the operation of specialized institutions.

(5) Establish effective national complaint and remedy mechanisms for AI-related human rights violations

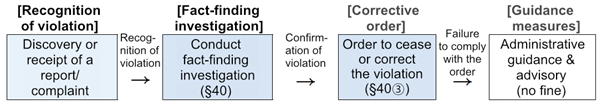

- The Act provides that the MSIT may receive reports or complaints regarding violations of the Act and conduct fact-finding investigations (§40). However, before actual sanctions can be imposed on AI operators that infringe human rights, the process must pass through multiple stages, including reporting, investigation, orders, and noncompliance with orders. Moreover, fact-finding investigations are discretionary measures that may be conducted only “where necessary” by a ministry whose primary mandate includes the promotion of the AI industry. This raises serious concerns regarding the effectiveness and independence of the remedy system. The MSIT has also stated that, following the enforcement of the Act on January 22, 2026, there will be a regulatory grace period of “at least one year,” making it unclear when actual enforcement against AI operators under the Act will begin.

<Figure 2> Process for Applying the Guidance Period

* source: MSIT (2025). Direction for Enacting Subordinate Regulations under the AI Framework Act, p. 5.

- (Call for Improvement)

- Clarify and minimize the regulatory grace period.

- Ensure effective remedies by requiring competent ministries and relevant authorities to respond promptly when AI-related human rights violations are identified or when reports or complaints are filed under the Act. In employment-related cases, this includes the Ministry of Employment and Labor.

— end —

About the Organizations

Digital Justice Network, launched in 1998 as the Korean Progressive Network Jinbonet, has provided network services for social movements and advocated for digital rights. The organization adopted its current name in 2025. Recently, it has focused its efforts on addressing the power of Big Tech and protecting the rights of those affected by the risks of artificial intelligence. The Digital Justice Network is a member of the Association for Progressive Communications (APC). http://www.digitaljustice.kr

The Institute for Digital Rights was established in 2015 as a civil society-based research institute. It has been dedicated to promoting digital rights in Korean society and incorporating them into policy. Recently, the Institute has introduced a human rights-based approach to AI and worked to integrate this approach into national frameworks such as the AI Framework Act. It has also been exploring various civil society activities to advocate for the rights and empowerment of individuals and groups affected by AI. http://idr.jinbo.net

Korean Transnational Corporations Watch is a Korean civil society coalition working on corporate accountability, business and human rights, and environmental justice in relation to the overseas activities and global supply chains of Korean multinational corporations. https://ktncwatch.org

Contact

For further information, please contact:

Chang, Yeo-Kyung

Executive Director, Institute for Digital Rights

idrsec@proton.me